Below are some notes that I took while following Daniel Shiffman’s video tutorials on neural networks, which are heavily inspired by Make Your Own Neural Network, a book written by Tariq Rashid. The concepts and formulas here are not my original material, I just wrote them down in order to better understand and remember them.

Feedforward algorithm

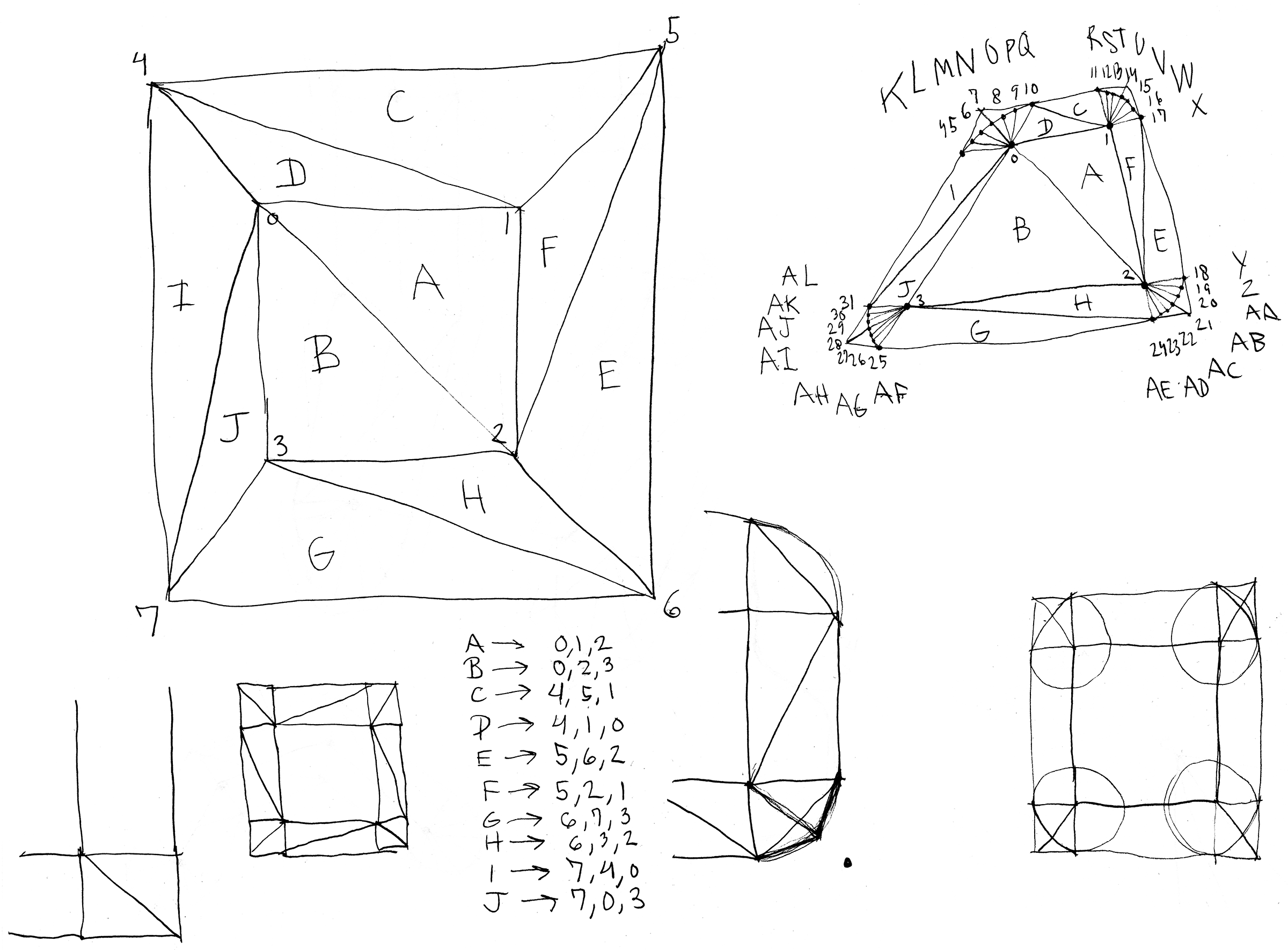

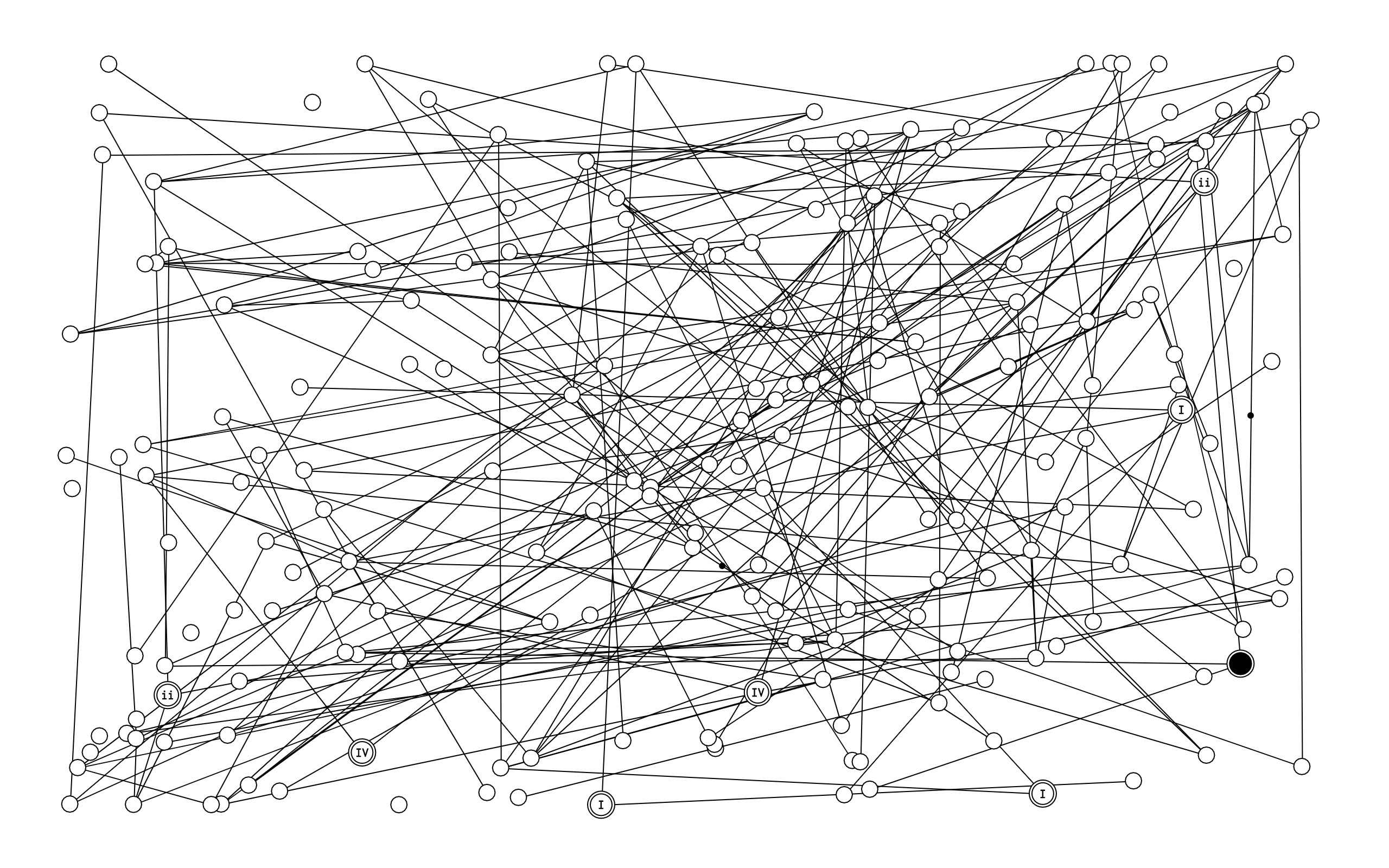

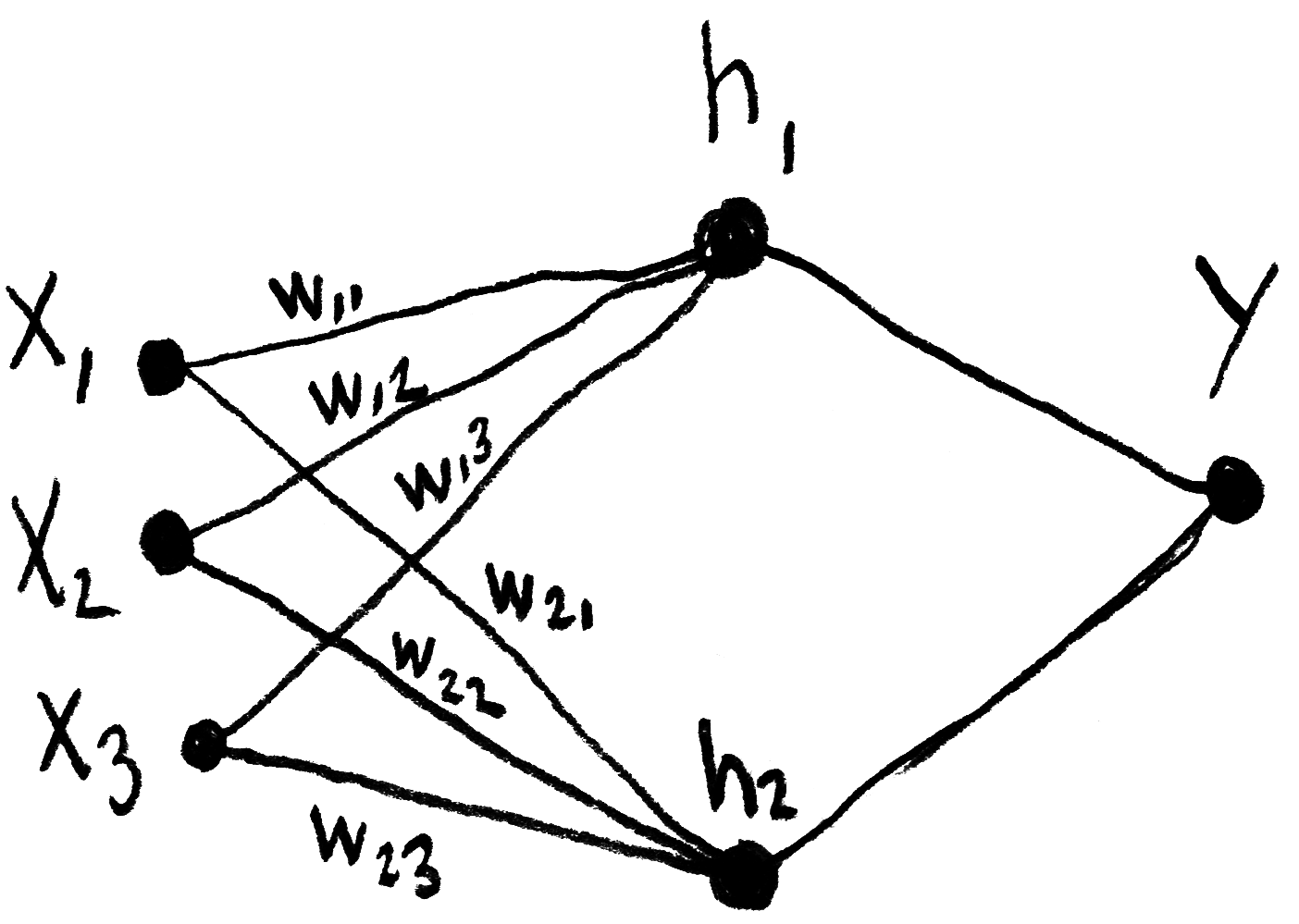

The calculations made by one the network’s layers, which takes into account its synaptic “weights”, can be represented by the matrix product written down below, in which h represents an intermediary layer (or “hidden layer”) of the network, w represents the weights and x represents the inputs. In this inverted notation, ⃗wij indicates the weight from j to i.

This product can also be represented thusly: